|

|

"If I can't picture it, I can't understand it."

-- Albert Einstein

Vision

Let's think about human vision for a moment. The eye receives an image, and hundreds of thousands of fibres in the optic nerve simultaneously send to the brain signals that, taken together, represent the whole scene. Human vision uses an abundance of "channels," all at once.

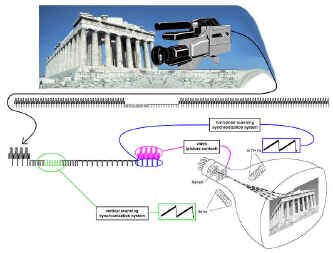

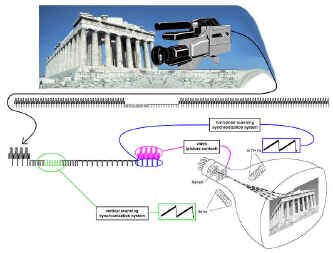

In television, however, the entire scene must be sent through a single channel. Think of it as a serial process, sent down a series circuit. Within the camera an electrical signal is formed to represent the changing brightness and colour of each part of the scene. This signal is sent to the monitor. At the monitor the signal is transformed back into light, and each part of the image is assembled on the viewing screen in its proper relative position.

|

|

In the television system, the picture we want to see is "scanned" sequentially, left to right along each line and line by line from top to bottom. The whole scan is repeated approximately 30 times every second, so we say that television runs at a rate of 30 frames per second.

Even though the picture elements are laid down on the screen one after the other, they all must be perceived at once. This requirement is met by persistence of vision - a property of the eye. When light entering the eye is shut off, the impression of light persists for about a tenth of a second. So, if all the picture elements in the image are presented successively to the eye in a tenth of a second or less, the whole area of the screen appears illuminated, although in fact only one spot of light is present at any instant. Activity in the scene is represented, as in motion pictures, by a series of still pictures, each differing slightly from those preceding and following it.

|

The perception of motion comes to us by a series of still images - Muybridge's famous "galloping horse" experiment of the late 1800s

|

Black and White Television

Electrons

Before we go on further in our discussion of video, let's take a moment to have a look at electrons, since they are the basis of this whole television business anyway, and a quick overview will be invaluable in our discussion of monitors and TV sets later on.

The electron is often described as a "particle of electricity." The characteristic that we care about for now is that an electron has an electric charge. By the way, an electric current down a wire is a flow of electrons, too.

Electrons were discovered in 1897 by Joseph J. Thomson, a British physicist, in the form of cathode rays - actually a stream of electrons. What's really interesting about cathode rays is that they can be deflected by magnetic and electric fields. An electron is essentially weightless - it has a mass of about 9.1083 x 10^-28 grams.

Let's now try to do something useful with this stream of electrons. If we move them in a particular pattern across our picture tube, we get:

Scanning

The process of breaking down the scene into picture elements and reassembling them on the screen is known as scanning. It's like your eye's motion when you read this page. In scanning, the scene is broken into a series of horizontal lines.

|

The principle of scanning (note how each line breaks down the scene into discrete elements of picture intensity)

|

|

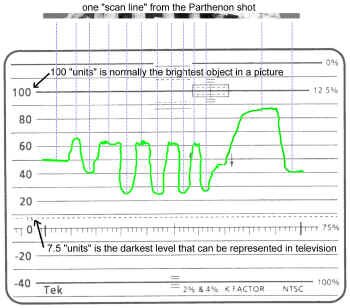

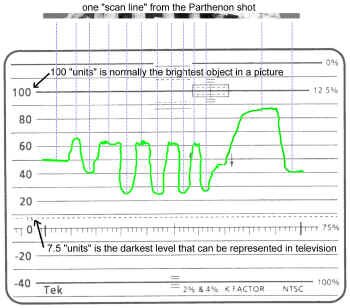

A voltage created as the camera scans across one line of the Parthenon shot

|

At The Camera

The camera "reads" the topmost line from left to right, producing a series of electrical signals that corresponds to the lights and shadows along that line. It then passes back to the left end of the next line below and follows it in the same way. In this fashion the camera reads the whole area of the scene, line by line, until the bottom of the picture is reached. Then the camera scans the next image, repeating the process continuously. The television camera has now produced a rapid sequence of electrical impulses; they correspond to the order of picture elements scanned in every line of every image.

|

At The Monitor

At the television monitor this signal is recovered and controls the picture tube. The picture tube creates an image that is composed of horizontal lines just like those produced in the camera. As the camera examines the topmost line, a spot of light produced by the picture tube moves across the screen and produces the topmost line of light on the screen. The video signal causes the spot of light to become brighter or darker as it moves, and so the picture elements scanned by the camera are reproduced line by line at the monitor, until the whole area of the screen is covered, completing the image. Then the process is repeated.
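The camera-to-monitor round trip described above can be sketched in a few lines of Python. This is only a toy: the tiny `scene` grid and the function names `scan` and `display` are made up for illustration, and a real signal is a continuous voltage, not a list of numbers.

```python
# Toy "scene": brightness values, 0 = black, 9 = white (illustrative only).
scene = [
    [0, 3, 9, 3],
    [1, 5, 9, 5],
    [0, 2, 7, 2],
]

def scan(image):
    """Camera side: read each line left to right, top line first,
    producing one serial stream of picture elements."""
    return [value for line in image for value in line]

def display(signal, line_length):
    """Monitor side: lay the serial stream back down one line at a time."""
    return [signal[i:i + line_length] for i in range(0, len(signal), line_length)]

signal = scan(scene)
rebuilt = display(signal, line_length=4)

print(signal)            # the whole scene as a single channel of values
print(rebuilt == scene)  # the monitor recovers the original layout
```

Notice that the monitor needs to know where each line ends (`line_length` here). In the real system that job falls to the sync signals discussed later on.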

Try This At Home!

Go to your friend's place. The one who didn't get the loan of the colour TV when they moved to Toronto, so they have the clunker black and white set instead. Take a magnifying glass with you.

Turn on the set, tune in a channel, and hold the magnifying glass up to the screen. Notice the scanning lines that make up the picture. If your friend asks what you're doing, tell 'em you're practising your Sherlock Holmes impression.

By the way, this doesn't really work on a colour TV, but there's a nifty thing we can look for, that we'll mention later on.

Interlace

|

Field One

|

Field Two

|

Interlaced Together...

|

Because the phosphor coating on the picture tube can only keep the picture information for a certain amount of time, flicker results if scanning from top to bottom only occurs at 30 frames per second. To avoid flicker, each still picture is presented twice by a process known as interlaced scanning. After the topmost line is scanned, space for another line is left immediately below it, and the next scanned line appears just below the empty space. As the scanning proceeds, alternate lines are scanned, with empty spaces between them. This represents the first showing of the still picture. The next image also consists of spaced lines, and its lines fall precisely in the empty spaces of the preceding image, so the whole screen is filled by the two sets of interlaced scanning lines. These two sets of scanning lines are called fields. There are two fields to each frame of television scanning.
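Here is a small Python sketch of the idea, assuming a toy frame of only seven lines. The function names are invented for this example, and it ignores the half-line detail that real interlaced video relies on (that comes up in the section on the vertical interval).

```python
def split_into_fields(frame_lines):
    """Field one takes lines 1, 3, 5, ...; field two takes lines 2, 4, 6, ..."""
    field_one = frame_lines[0::2]
    field_two = frame_lines[1::2]
    return field_one, field_two

def interlace(field_one, field_two):
    """Weave the two fields back together into one full frame."""
    frame = []
    for i in range(max(len(field_one), len(field_two))):
        if i < len(field_one):
            frame.append(field_one[i])
        if i < len(field_two):
            frame.append(field_two[i])
    return frame

frame = [f"line {n}" for n in range(1, 8)]
field_one, field_two = split_into_fields(frame)

print(field_one)   # shown first, with gaps between its lines
print(field_two)   # shown second, filling in the gaps
print(interlace(field_one, field_two) == frame)
```

Each field covers the whole height of the screen with half the lines, so the screen is refreshed top to bottom twice for every complete frame.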

The Sawtooth Wave

Consider for a moment the actual trace of the electron beam in either a camera or a picture tube. It goes evenly from left to right, then snaps back quickly to the left. The process repeats itself over and over. To make the beam do this, we apply a scanning voltage to coils of wire around the neck of the tube that act as electromagnets moving the beam around. The scanning voltage is called a sawtooth and looks like, well, the teeth of a saw - a smooth gradual ramp in voltage, followed by a sudden return to the start of the ramp again. The electron beam moves from left to right across the screen, and then rapidly back, following the wave shape of the scanning voltage.

The vertical scanning in the television system is done similarly, as the beam moves 60 times a second from the top of the screen to the bottom. We have to control these sawtooth waveforms in some way.
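If you'd like to see the shape in numbers, here is a rough Python sketch of a sawtooth. The function name and the `retrace_fraction` of 0.1 are assumptions picked for illustration; the actual split between trace and retrace time in a real set is different for horizontal and vertical scanning.

```python
def sawtooth(t, frequency_hz, retrace_fraction=0.1):
    """Beam deflection at time t (seconds): -1.0 is the start of the
    sweep, +1.0 is the end. retrace_fraction is an illustrative guess
    at how much of each cycle is spent snapping back."""
    phase = (t * frequency_hz) % 1.0          # position within one cycle, 0..1
    trace = 1.0 - retrace_fraction
    if phase < trace:
        # Slow, even ramp across the screen.
        return -1.0 + 2.0 * phase / trace
    # Fast return to the starting edge.
    return 1.0 - 2.0 * (phase - trace) / retrace_fraction

# One cycle of a 1 Hz sawtooth, sampled every tenth of a second.
for step in range(11):
    t = step / 10
    print(f"t={t:.1f}s  deflection={sawtooth(t, 1.0):+.2f}")
```

Calling the same function with a frequency of 60 gives the vertical sweep described above, and with a frequency in the region of 15,000 gives the horizontal one.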

|

Sawtooth wave

Basic television scanning process

|

Sync Signals

We have already created a constantly changing voltage called the "video" signal. In its primitive form, it is just the changes in an electrical signal that represent the light and dark areas of a scene. These signals go from an arbitrary "zero percent" or "no light level" to "100 percent" or "maximum light level"; our scale is actually from 7.5 to 100. As this signal is applied to a CRT electron beam, it reproduces with light the various areas of the picture.

|

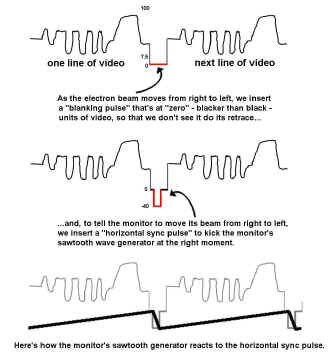

How we tell monitors how to scan from one line to the next

|

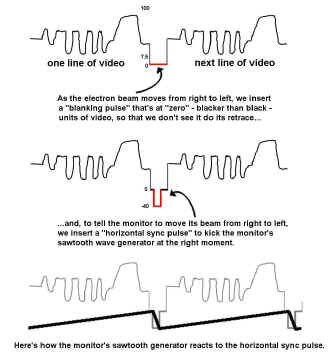

Horizontal Interval

But when we want to retrace the scan line back to the left again, we don't want to see it - we want it "blanked" out. So, during the "right to left" retrace period, we insert into our video signal a "horizontal blanking pulse" (at "zero units," so we won't see the retrace).

This is fine, but to ensure that our sawtooth doesn't drift off frequency over a long time (which would "skew" our picture in unpredictable ways), we send, within the horizontal blanking period, a "horizontal sync pulse." This can be used to feed the sawtooth generator circuit, to give it a jolt, to re-synchronize it at the end of every line. We'll place this sync pulse at an intensity where it can easily be detected by the sawtooth circuit, and will never be seen by the viewer - at "-40" on our relative scale. The pulses will be sent 15,734 times a second (one for each line of video).
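To make the levels concrete, here is a Python sketch that builds one line of signal using the relative scale from the text (sync at -40, blanking at 0, picture from 7.5 to 100). The sample counts, the detection threshold of -20, and the function names are all assumptions for illustration; they are not real NTSC timings.

```python
SYNC_LEVEL = -40.0      # sync pulse level, from the text
BLANKING_LEVEL = 0.0    # "zero units", so the retrace is not seen
BLACK_LEVEL = 7.5       # bottom of the picture scale
WHITE_LEVEL = 100.0     # top of the picture scale

def build_line(brightness, blank_samples=2, sync_samples=3):
    """Build one scan line: blanking, sync pulse, blanking, then picture.
    brightness holds values from 0.0 (black) to 1.0 (white).
    The sample counts are illustrative, not real timings."""
    picture = [BLACK_LEVEL + b * (WHITE_LEVEL - BLACK_LEVEL) for b in brightness]
    blanking = [BLANKING_LEVEL] * blank_samples
    sync = [SYNC_LEVEL] * sync_samples
    return blanking + sync + blanking + picture

def find_sync_start(line, threshold=-20.0):
    """Return the index of the first sample below the threshold, or None.
    A level this low can only be sync, never picture."""
    for index, level in enumerate(line):
        if level < threshold:
            return index
    return None

line = build_line([0.0, 0.5, 1.0])
print(line)
print(find_sync_start(line))
```

Because picture information never dips below blanking level, a simple level check is enough for the monitor to pick the sync pulse out of the signal.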

|

|

The vertical interval, featuring blanking, vertical sync pulse, and equalizing pulses

|

Vertical Interval

A similar process occurs with the vertical sweep. A vertical sync pulse is created. This pulse triggers the second sawtooth wave generator - the one that controls the "top to bottom, and back to the top" part of scanning. It tells this generator "better make your way back to the top of the screen now." The shape of the vertical sync pulse is actually six small pulses. It's made up this way to provide synchronization for the horizontal sawtooth generator during the vertical retrace period.

In addition to the vertical sync pulses, another group of pulses is required when using interlaced scanning. Interlacing occurs because the second field of scanning starts half a line's distance across the screen, relative to the first field. This means that the vertical sawtooth voltage (inside the monitor) for one field must occur one half line later than for the other one.

Now, since our vertical sweeps are locked into the vertical sync pulses, they must occur one half line after the last horizontal sync pulse in one field, and one full line after the last horizontal sync pulse in the other field. One group of six "equalizing" pulses precedes the vertical sync pulse to allow this to happen properly; another group follows it. So, the equalizing pulses make interlacing happen and start the scans at the proper points in each of the two video fields.

|

No horizontal hold, due to missing horizontal sync pulses

Vertical roll due to lack of luck with vertical sync pulse

|

The vertical intervals at the end of each field differ a bit...

|

There's one more thing you should realize: there are two vertical intervals, one after the first field, and another after the second field. They differ slightly. Note that in Fig. A, the first field finishes after a half line of video; the first equalizing pulse is now displaced only a half line away from the last horizontal sync pulse in the previous field. Likewise, the first line of video is only a half line. The second field (Fig. B) is completed with a full line of video, and its corresponding vertical interval begins with a full line of video (which is the start of field one, again). Otherwise, the vertical blanking intervals for the even and odd line fields are identical.

An interesting point for pay-TV subscribers: often, scrambled video is just video without the sync - what you may be paying for is the horizontal sync signal!

Scrambled pay-TV

|

How the parts of the composite video signal affect the monitor

Try This At Home!

Okay, you can try this stuff on any TV set...

1. If your set has a "vertical hold" knob, rotate it - go ahead, it won't bite. You'll see the vertical interval (that's what that "black bar" is when your set goes wonky.) Look at it real close. Try and find the parts you just read about. Get acquainted with it; make it your friend.

2. Turn on some scrambled pay-TV. Watch the horizontal and vertical intervals jump all around.

3. Put the set back the way you found it when you're done, or your roommates will be really annoyed with you.

Colour Television

|

The additive colour wheel

|

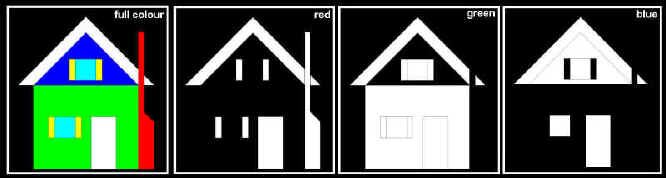

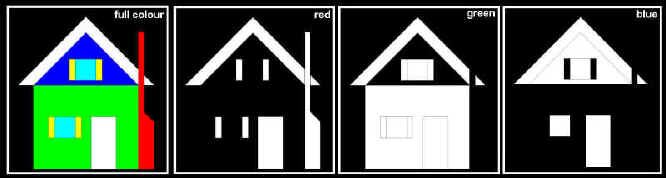

Colour television employs the basic principles of black-and-white television. The essential difference is that a colour picture is like three pictures in one.

The screen of a colour monitor, in effect, displays three images superimposed on each other. These images present, respectively, the red, green, and blue components of the colours in the scene. Colour television achieves reproduction of the wide range of natural colours by adjusting the relative brightness of these red, green, and blue images.

If two images are suppressed (for example, red and green), only the remaining colour (blue) is seen. If one image is suppressed (for example, blue), the other two (green and red) can cover the range of colours from green to red, including the intermediate colours orange and yellow, by changing the proportions of the red and green channels. When all three colours are present in the proper proportions, white light is produced - in fact, the whole range of grays from black to white can be reconstructed. By allowing one or two of the three colours to predominate, the white light can be given the tint of the stronger colours - pastel shades can be reproduced.

This colour representation process is called the additive colour system.
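A quick Python sketch shows the additive idea at work. Colours here are (red, green, blue) triples from 0.0 to 1.0; the helper names `add_light` and `scale` are invented for this example, and real phosphors and eyes are nowhere near this tidy.

```python
RED = (1.0, 0.0, 0.0)
GREEN = (0.0, 1.0, 0.0)
BLUE = (0.0, 0.0, 1.0)

def add_light(*colours):
    """Add coloured lights channel by channel, limiting each channel to 1.0."""
    return tuple(min(1.0, sum(c[i] for c in colours)) for i in range(3))

def scale(colour, amount):
    """Dim a colour by turning all three channels down by the same amount."""
    return tuple(channel * amount for channel in colour)

yellow = add_light(RED, GREEN)             # blue image suppressed
white = add_light(RED, GREEN, BLUE)        # all three in equal proportion
grey = scale(white, 0.5)                   # same proportions, less light
pink = add_light(scale(white, 0.6), scale(RED, 0.4))  # white tinted by red

print(yellow, white, grey, pink)
```

The last line is the "pastel" case from the text: mostly white light, with one primary allowed to predominate.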

|

Colour Picture Tubes

At the rear of the picture tube is the electron gun, which produces three separate beams of electrons. The inside of the screen is coated with tiny dots of phosphor that glow red, green, or blue when struck by electrons. The tube is designed so each beam can hit dots of only one colour; a mask prevents each beam from striking the others' colour dots. Because the coloured dots are so small that they cannot be seen separately by the viewer, the effect is three superimposed images in the primary colours. By adjusting the strength of the respective beams of electrons, the relative brightness of the image produced by each can be changed.

|

Colour picture tube cutaway, showing electron gun, shadow mask and arrangement of phosphor dots (courtesy Broadcast Engineering)

|

Try This At Home!

If you look at the screen of your colour TV set with a magnifying glass (turn it on, first), you can easily see the red, green, and blue phosphors at work. If you don't have a magnifying glass, try spraying the front of the screen with window cleaner (heck, your TV set screen probably needed cleaning anyway...)

Try This In A Bar!

Go into a bar some quiet night, and, if you can, walk through the three beams that make up the picture on the bar's projection TV (or, just put your hand in front of the lenses on the projector.) Notice how the red, green, and blue projections converge together to make the picture on the screen. Notice, also, how you now have to dodge the beer bottles being pitched by those patrons who are trying to watch the hockey game!

|

"Front end" of a modern CCD camera

|

Colour Cameras

The three electrical signals that control the respective beams in the picture tube are, these days, produced in the colour television camera by three CCD (Charge Coupled Device) integrated circuit chips.

The camera has a single lens, behind which a set of prisms or special dichroic mirrors produce three images of the scene. These are focused on the three CCDs. In front of each one is a colour filter; the filters pass respectively only the red, green, or blue components of the light in the scene to the chips. The three signals produced by the camera are transmitted to the respective electron guns in the picture tube, where they re-create the scene.

|

Separation of full colour picture into red, green, and blue images

Three Channels of Colour - One Wire?

One way of connecting the camera to the picture tube is to use three separate cables, one for each of the primary colour signals. In fact, a computer monitor takes the separate channels of red, green and blue sent by the computer's video card and displays them directly on the screen. To broadcast colour programs by this method, however, would require each station to use three channels. The number of channels available for television is so limited that this method is impractical. Also, if a black-and-white TV receiver were to tune in on such a colour broadcast, it could receive only one of the three channels, and the grey-scale values reproduced would be unnatural. There has to be a better way.

Making Luminance

Let's start by creating the luminance information from these colour channels. It's produced by adding, electronically, the three signals from the colour camera, in the ratios 30% red, 59% green, and 11% blue. The luminance signal is what a black-and-white broadcast is like, so the black and white receiver, which interprets only this signal, gives a correct rendition of the broadcast.
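The weighted sum is simple enough to write out in Python. The function name is made up for this sketch, and the inputs are assumed to be signal levels from 0.0 to 1.0 for each colour channel.

```python
def luminance(red, green, blue):
    """Combine the three colour signals in the ratios given in the text:
    30% red, 59% green, 11% blue."""
    return 0.30 * red + 0.59 * green + 0.11 * blue

print(luminance(1.0, 1.0, 1.0))  # full white
print(luminance(0.0, 1.0, 0.0))  # pure green looks fairly bright in black and white
print(luminance(0.0, 0.0, 1.0))  # pure blue looks quite dark
```

The three weights add up to 100%, so equal amounts of red, green, and blue give a grey of the same level, which is just what a black-and-white set should show.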

We already know how to send a composite signal (incorporating black and white information and synchronization signals) down one wire. How can we add colour and still keep the whole process compatible with our black and white system?

Right now, with the invention of black and white television, we have a signal which has a full range of frequencies between about 30 Hz and 4,200,000 Hz (4.2 MHz) - that's how we transmit details in the television picture. Supposing we were to "borrow" a continuous sine wave, of a very particular high frequency within that range, and somehow use it to transmit colour information? How would we use it?

A Special Carrier Wave

One thing we can't do with this wave is change its frequency - that aspect of a wave is how we tell the television system about the details in a scene. However, there are two other things we can do with this high frequency sine wave - change its amplitude (level) and change its phase. If, somehow, we could give this signal attributes that corresponded to how saturated a colour was, and what particular hue it was, we could superimpose this signal on the luminance, and send them both down the same wire. And that's what we do - with a device called a colour encoder.

We have, in fact, chosen a particular frequency to represent colour information, and it is exactly 3.579545 MHz, but you can remember it as 3.58 MHz. This is high enough a frequency so it won't be seen on black and white television sets (except as occasional small "dots"), but will still be within the bandwidth of what we're allowed to transmit over the airwaves.

Through a process involving manipulation of this high frequency (using the variations present within the colour and luminance signals), we are able to produce what we call "colour subcarrier" that has within it all of the possible hues ("which colours") we would ever want to reproduce, and also information about how saturated ("colourful") our colours are.
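As a very rough sketch of the principle (not of a real colour encoder, whose workings are left to the Appendix), here is how a sine wave at the subcarrier frequency can carry two extra pieces of information. The function names, the 0.0 to 1.0 saturation scale, and the choice of which phase angle means which hue are all assumptions for illustration.

```python
import math

SUBCARRIER_HZ = 3_579_545  # colour subcarrier frequency from the text

def chroma_sample(t, saturation, hue_degrees):
    """Subcarrier value at time t (seconds).
    Amplitude stands in for saturation, phase stands in for hue."""
    angle = 2 * math.pi * SUBCARRIER_HZ * t + math.radians(hue_degrees)
    return saturation * math.sin(angle)

def composite_sample(t, luma, saturation, hue_degrees):
    """Superimpose the colour subcarrier on the luminance level."""
    return luma + chroma_sample(t, saturation, hue_degrees)

# A grey area has no saturation, so the subcarrier vanishes and only luminance is left.
print(composite_sample(0.0, luma=0.5, saturation=0.0, hue_degrees=90))

# A strongly coloured area rides the subcarrier on top of the same luminance.
print(composite_sample(0.0, luma=0.5, saturation=0.3, hue_degrees=90))
```

The frequency never changes; only the size of the wave and its timing relative to a reference do, which is why the luminance detail and the colour can share one wire.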

A Burst of Colour Information

There is also a separate reference "colour burst" that is added at the beginning of each video line, just after the horizontal sync signal. This is a short blip of colour subcarrier, and is used as a reference to give the colour monitor a "starting point" as to which colour is supposed to be represented, and how saturated that colour is. As various hues are displayed based on what phase of subcarrier is being transmitted, the NTSC designers decided that colour burst would be sent at a phase of 180 degrees.

Mix Ingredients Thoroughly...

This colour signal (the colour subcarrier, continuously changing its amplitude and phase, and the colour burst itself) is mixed with our already available black and white signal, so that the entire composite can be sent down a single wire.

The Colour Encoding Process - In Detail

Want to know how the colour encoding process really works? In all the nitty-gritty detail? For a description of the colour encoding process in all its glory, please refer to the Appendix. Here's a thought - if you aren't sure about this colour encoding stuff, sit with a friend and work through the section in the Appendix. It does no harm to have a look, and something may come clear to you that you hadn't understood before.

One last hitch about this colour encoding stuff and then we're done - honest.

Video Is Not 30 Frames Per Second???

Up until now, we've been saying how television scans 525 lines in 1/30 of a second. Well, that's not exactly true. You see, it was true in the days of black and white television. But, to keep the visibility of the colour subcarrier in the monitor to a minimum (the "little dots" we referred to earlier), a couple of the specifications got changed.

In black and white television, 525 lines scanned in 1/30 of a second gave us a line scan rate of 15,750 Hz (525 x 30). With the invention of colour television, that was changed to be precisely related to the subcarrier frequency - 2/455 of it, in fact - which made it 15,734 Hz (2/455 x 3,579,545 Hz).

Having changed the line scan rate, the frame rate also had to change, from 30 frames a second, to 29.97 frames a second (15,734 / 525). You might think that this doesn't matter too much - after all, 29.97...30...whateverrr...close enough, right? But when you start editing videotape, on the frame, these little discrepancies have a tendency of adding up, making your show too short or too long. This problem comes back to haunt us when we start thinking about time code (a frame numbering system used in editing.) See the chapter on Editing for more details on this conundrum.
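The arithmetic is easy to check in Python, using only the figures quoted above. The one-hour example at the end is an added illustration of how the discrepancy piles up.

```python
SUBCARRIER_HZ = 3_579_545   # colour subcarrier frequency
LINES_PER_FRAME = 525

# Line rate is 2/455 of the subcarrier frequency.
line_rate_hz = SUBCARRIER_HZ * 2 / 455
frame_rate = line_rate_hz / LINES_PER_FRAME

print(f"line rate:  {line_rate_hz:.2f} Hz")
print(f"frame rate: {frame_rate:.4f} frames per second")

# How far apart do "30" and the real rate drift over one hour?
seconds = 60 * 60
nominal_frames = 30 * seconds
actual_frames = frame_rate * seconds
drift_frames = nominal_frames - actual_frames

print(f"frames counted at 30 per second: {nominal_frames}")
print(f"frames actually scanned:         {actual_frames:.1f}")
print(f"difference after one hour:       {drift_frames:.1f} frames")
```

That works out to roughly 108 frames, or about 3.6 seconds, in every hour of programme, which is plenty to throw off a show that has to hit a broadcast slot on time.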

Transmitting The Image & NTSC Resolution - A Little History, and A Look To The Future

Back in 1936, most of the new science and technology involved in television had been worked out by two committees of the Radio Manufacturers Association (RMA), and in the year before, RCA had demonstrated a fully electronic 343-line television system.

In 1936 (before there was an NTSC), the committees decided: the television transmission channel should be 6 MHz wide. This was a huge chunk of radio frequency spectrum to be assigned for each channel - it was 600 times as wide a band as an AM broadcast station used, but it still allowed lots of channels, with reasonable resolution in each.

Most of the channel is used to transmit the primary video signal, which occupies a sideband of 4.2 MHz (as decided by the RMA Television Standards Committee in 1938) - the other portions of the channel are required for a vestigial sideband of the video signal and for sound transmission. It's the standard by which we live today, and as such, puts very real limits onto the maximum resolution of NTSC television.

So, what are the limits of NTSC resolution? This is a question hotly debated by everybody from broadcast engineers to audio/video salesmen, so we'll tread safely on some known planks of information.

Vertical Resolution

Let's start with the easy one: vertical resolution. We speak of NTSC video as having 525 scanning lines. This number includes 42 of them (21 per field) that are blanked out and used up during periods of vertical retrace (the electron beam has to get from the bottom to the top of the frame, and this takes a certain amount of time). Therefore, we really have 483 lines available to us for picture material. Now, consider a test chart with a series of fine black and white lines, running horizontally. Place this in front of a camera. How many lines can you see on the screen before they begin to blend?

If we're really, really careful, we might just be able to scan exactly 483 black and white lines - one chart line being scanned exactly by one line of video. The odds of this happening are pretty dismal. In fact, if by some chance, we vertically re-position the chart within the camera's framing just a little bit, so our video scanning lines each straddle a black and a white line, the result will be a totally grey screen with no lines visible! As we play around with this game of chance, it turns out that we can successfully reproduce about 340 lines as often as we like. That's our practical vertical resolution. This fooling around with horizontal stripes and a TV camera that we have just done can be mathematically estimated, and it has a name. The Kell factor, as it's called, is equal to about 0.7, so, if we use it, we get 483 x 0.7 = 338, or roughly 340 lines.
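Here is the same sum in Python, using the numbers from the text. The variable names are just labels chosen for this sketch.

```python
TOTAL_LINES = 525
BLANKED_LINES_PER_FIELD = 21
FIELDS_PER_FRAME = 2
KELL_FACTOR = 0.7

# Lines left over for picture once vertical retrace is accounted for.
active_lines = TOTAL_LINES - BLANKED_LINES_PER_FIELD * FIELDS_PER_FRAME

# Practical vertical resolution after applying the Kell factor.
vertical_resolution = active_lines * KELL_FACTOR

print(active_lines)
print(round(vertical_resolution, 1))
```

The exact product is a little under 340, so treat the "340 lines" figure as a convenient round number.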

|

Using a test card with a series of horizontal lines, to check vertical resolution of NTSC TV

|

|

Using a test card with a series of vertical stripes to test the horizontal resolution of NTSC TV

|

Horizontal Resolution

Now comes the trickier one: horizontal resolution. The arithmetic on this is a little deeper, so bear with me as we go through it.

We think of our NTSC scheme as a 525-line, 29.97 frame per second, television system, that takes 1/15,734 of a second for each line to scan across the screen.

Some of that scan line time is used to get the electron beam back from the right side of the screen over to the left again. This is called horizontal retrace, and it leaves us with a practical visible scan line that takes about 1/18975 of a second to track across the screen from left to right. (1)

If we take our 4.2 MHz (4,200,000 cycles per second) of bandwidth that we're allowed at transmission, and multiply it by the 1/18975 of a second that we have to display our video in (4,200,000 / 18,975), we get about 220 cycles of signal per line.

Yuck. Television pictures would look pretty chunky if you only were allowed a little more than 200 picture elements per line. But these are cycles, not pixels. Consider that a "cycle" is a positive going voltage followed by a negative going voltage - like in audio - and we represent video by a series of ever-changing voltages corresponding to the light level read by the camera.

If we were to take our "bunch of black and white lines" chart and tip it so the lines were vertical, how many lines (black lines and white lines) would we see before we blurred? Each black line would be a low voltage, followed by each white line - a high voltage. That would be one "cycle." So with 220 cycles available to us, we could see 440 lines of video across the screen. That's more like it.
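Working it through in Python with the figures above gives numbers a shade higher than the rounded ones in the text. The variable names are only labels for this sketch.

```python
BANDWIDTH_HZ = 4_200_000          # video bandwidth allowed at transmission
VISIBLE_LINE_SECONDS = 1 / 18975  # time for the visible part of one scan line

# Cycles of the highest video frequency that fit into one visible line.
cycles_per_line = BANDWIDTH_HZ * VISIBLE_LINE_SECONDS

# Each cycle can show one dark line and one light line.
lines_per_width = 2 * cycles_per_line

print(round(cycles_per_line, 1))
print(round(lines_per_width, 1))
```

Rounded down to two significant figures, that is the 220 cycles and 440 lines quoted here.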

To re-cap then, the practical resolution of our broadcast transmission environment is 340 lines top to bottom, and 440 lines left to right. (2)

|

TV Lines Per Picture Height (TVL/PH)

We're now going to express these two resolutions a little differently, since with DTV and HDTV, we'll be dealing with screen sizes that aren't always 4:3. Today, we refer to resolutions expressed in "TV lines per picture height" (TVL/PH). What this means is that we take a "square" piece of the television picture (an area with equal height and width) and see how much resolution we have in the horizontal and vertical directions within that shape. This makes a certain amount of sense in that we are comparing the same distance in each direction on the television screen and speaking of the relative resolution in each of those directions. This makes it possible for us to compare NTSC resolution to DTV digital resolution to HDTV resolution since we're looking at the same square for all these formats.

Let's try it with NTSC. Since our picture here is 4:3, we'll take a piece of the picture that's essentially 3:3. This makes the vertical resolution number easy to figure out: it's the same as the full height of the 4:3 screen, or 340 TVL/PH. For horizontal resolution, let's make our way across the screen, left to right, for the same distance, or about 3/4 of the way across the NTSC screen. That will give us 440 x 0.75 = 330 TVL/PH. You'll notice how the horizontal and vertical resolutions (330 vs. 340 TVL/PH) are almost identical, resulting in what could be thought of as "square pixels" on the screen, with equal resolutions in both directions.
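The conversion is a one-liner, sketched here in Python. The function name is invented for this example; the 16:9 call at the end uses a made-up figure of 1,000 lines purely to show how the same sum applies to a wider screen.

```python
def tvl_per_picture_height(lines_across_full_width, aspect_width, aspect_height):
    """Scale a full-width horizontal resolution down to the portion of the
    width that equals the picture height."""
    return lines_across_full_width * aspect_height / aspect_width

# NTSC: 440 lines across a 4:3 screen.
print(tvl_per_picture_height(440, 4, 3))

# Hypothetical wide-screen example: 1,000 lines across a 16:9 screen.
print(tvl_per_picture_height(1000, 16, 9))
```

Because the answer is always measured over a square patch of screen, figures for different aspect ratios can be compared directly.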

The next time you walk into a mega-hi-fi/video store and the salesperson tries to sell you an expensive TV set with "over 600 lines of resolution", just remember to ask them why this is necessary, since no broadcaster sends out that much resolution in the first place...I wonder what their answer would be? Oh, and while you're at it, impress 'em with your new knowledge of "lines per picture height".

Things To Think About:

The purpose of our television system is to allow us to send television down a single transmission channel.

Some parts of the process to do this include scanning, interlace, synchronization signals, and, in the case of colour television, colour encoding of separate colour channels of picture information.

This system has inherent within it certain limits of resolution. What are those limits?

1. This figure may vary from 1/18832 to 1/19048, depending who you talk to and whether they're considering doing this calculation based on minimum or maximum time for horizontal blanking (retrace).

2. Some engineers do the calculations a little differently than I've done them here - your mileage may vary.